Publications

Conferences & Journals

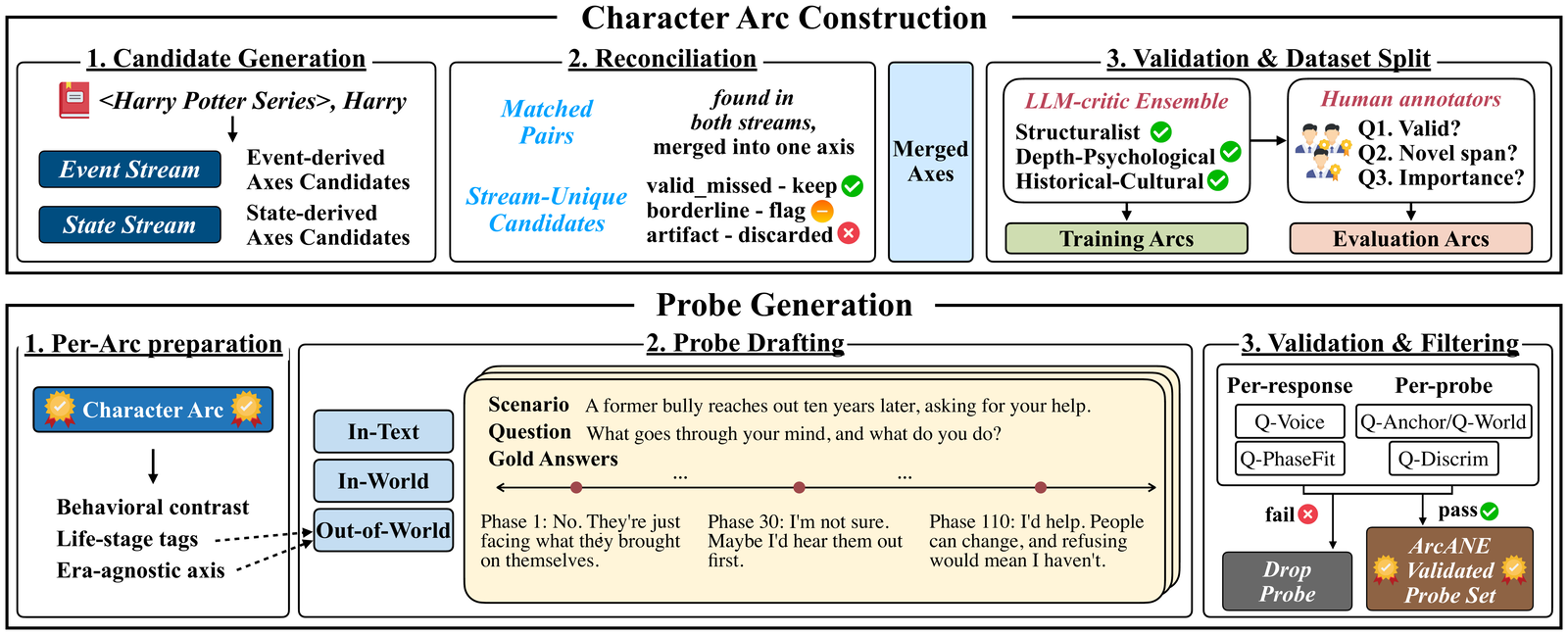

An automatically constructed benchmark of 17 novels and 80 principal characters that tests whether role-playing agents shift their behavior in step with a character's evolving psychology. A Character Arc segments the narrative into psychological phases, and conditioning on it beats every other context strategy on every model, with the largest gains on scenarios the source text never explores.

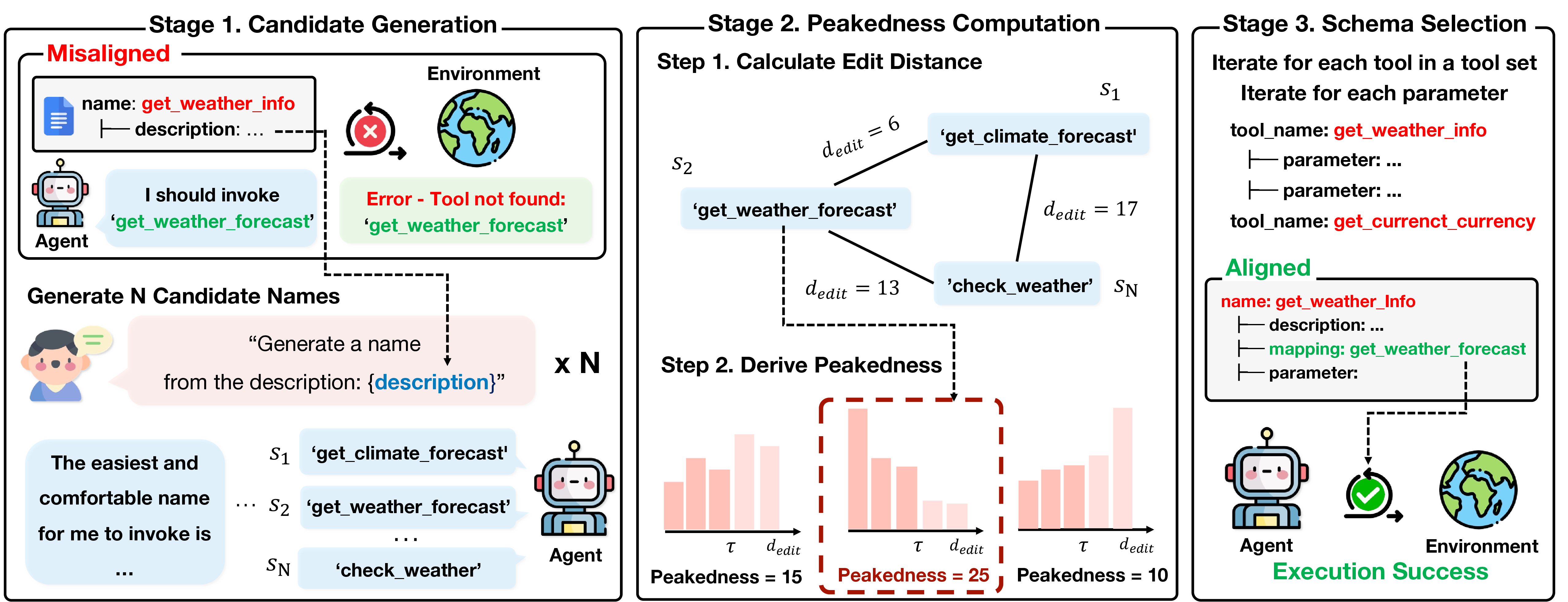

PA-Tool renames tool schema components to match small language models' pretraining vocabulary, improving tool-use accuracy by up to 17% without any model retraining.

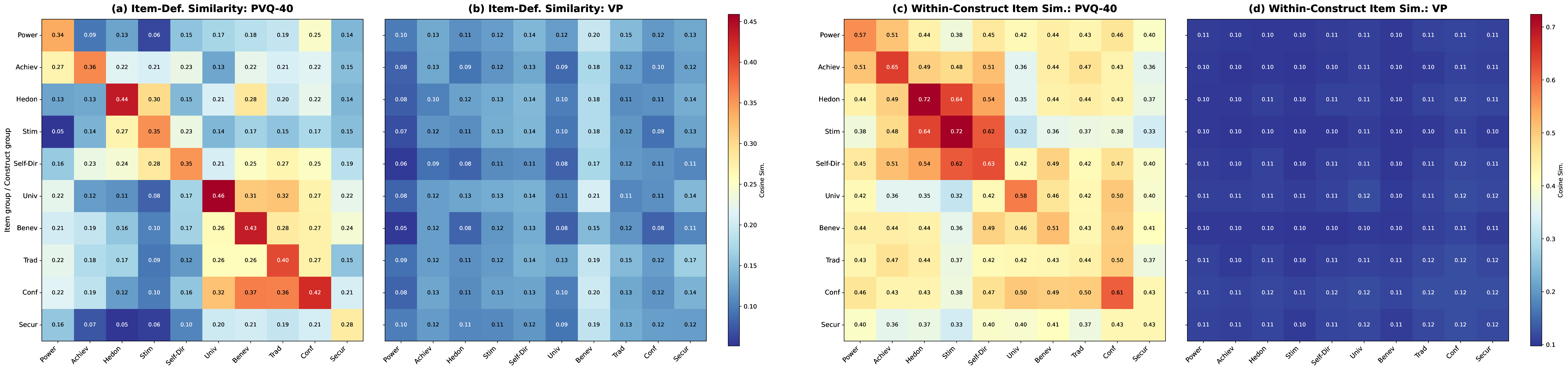

Reveals a gap between LLM psychological profiles measured by standard questionnaires and those observed in actual generation behavior, calling into question the validity of questionnaire-based assessments.

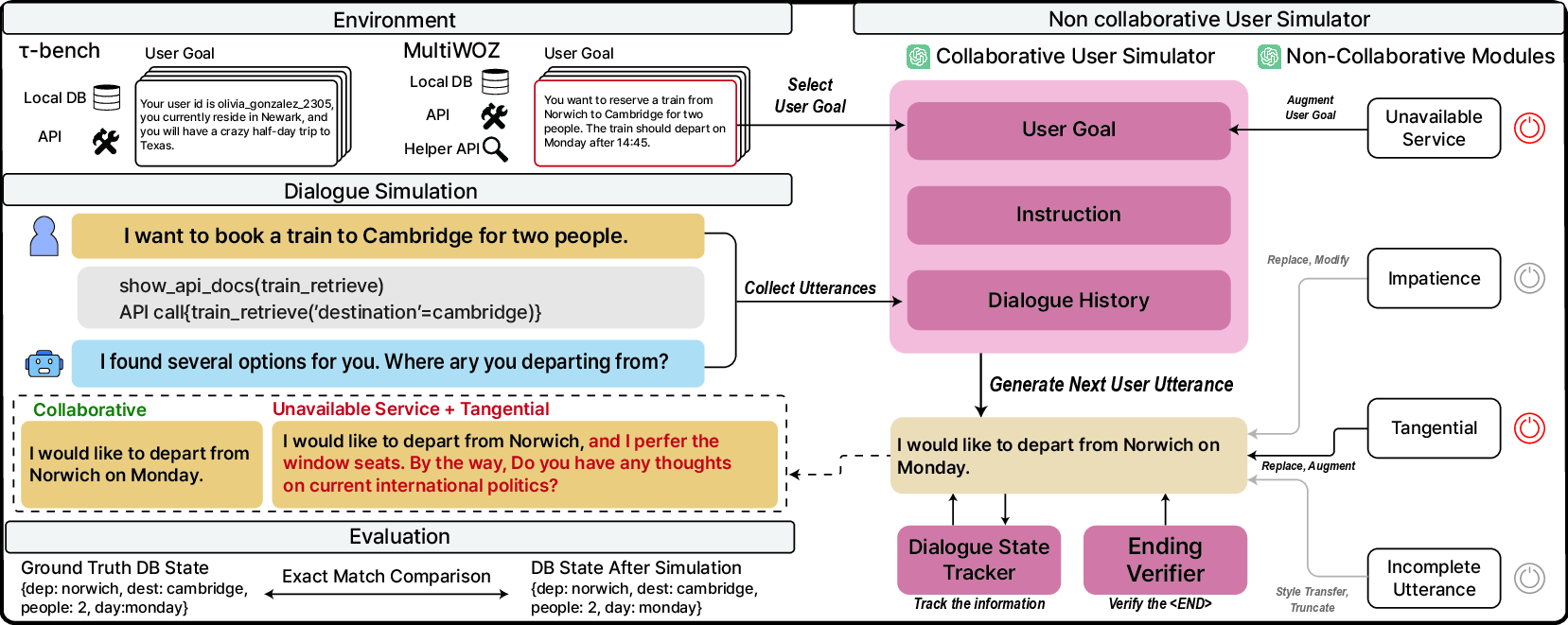

A user simulator that generates realistic non-cooperative behaviors, exposing significant performance drops in state-of-the-art tool agents under adversarial conditions.

Models trait-response mediators to turn LLMs into virtual survey respondents, enabling cost-effective validation of psychometric items across Big Five, Schwartz values, and VIA character strengths.

A three-level framework analyzing item memorization, evaluation memorization, and target score matching to quantify how data contamination undermines psychometric LLM benchmarks.

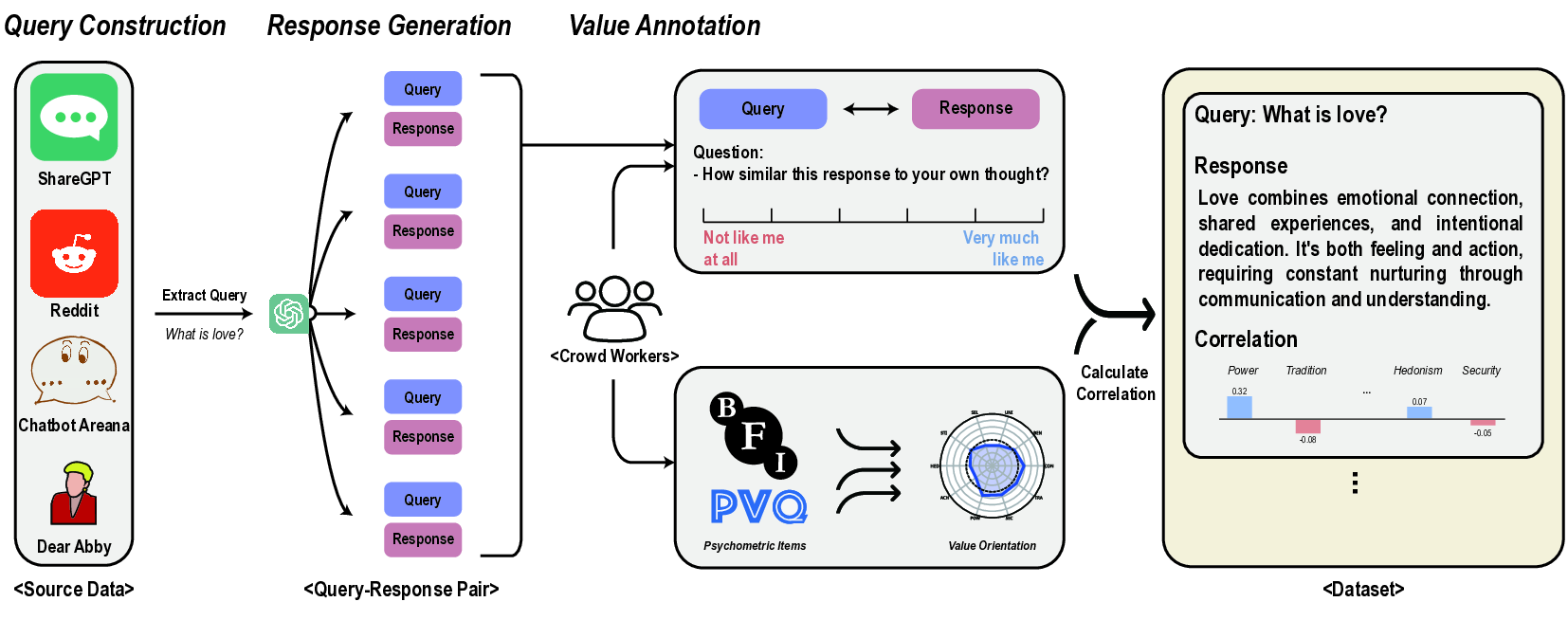

An evaluation framework grounded in real user-LLM interactions, finding that 44 LLMs consistently prioritize Benevolence and Self-Direction while undervaluing Tradition and Power.

Applies interpretable machine learning to real e-commerce transaction data to analyze private brand purchasing patterns across dog and cat owner segments. Dog owners respond more strongly to delivery convenience, while cat owners show greater price sensitivity.

Domestic Publications

An LLM-based system for generating Korean college entrance exam English reading comprehension questions, combining abductive reasoning with RAG and knowledge distillation.

A cryptographic access control technique that encrypts AI model parameters for secure on-device deployment, leveraging the ELU activation function under homomorphic encryption.